At Nerval, inclusive AI is a world-shaping ambition — and meeting that ambition requires disciplined, high-performance engineering. Building AI systems that serve low-resource languages and power sovereign, business-facing AI for organizations requires deep control over data, models, and the infrastructure that binds them together.

Nerval has designed, assembled, and deployed a multi-tiered in-house AI infrastructure spanning development, virtualization, and production environments.

Through the NVIDIA Inception Program, Nerval receives access to advanced NVIDIA platforms, technical insight, and program resources that have helped make this infrastructure both achievable and strategically sustainable.

Rather than relying exclusively on externally hosted compute, Nerval now operates its own infrastructure backbone — intentionally designed around complementary machines with distinct roles that together form a robust, sovereign AI operations platform.

Development Machine: Foundational Model Training and System Design

The Development Machine serves as Nerval's primary environment for building, training, and validating core AI systems. This includes foundational work in text-to-speech (TTS), automatic speech recognition (ASR), and multilingual translation, as well as the development of sovereign, organization-specific AI systems designed to support small and medium-sized businesses, institutions, and local enterprises.

This is where Nerval has done the critical groundwork: developing language models, speech systems, and AI capabilities that reflect real linguistic diversity while also meeting the practical needs of business operations — from productivity assistants to domain-specific automation and interfaces.

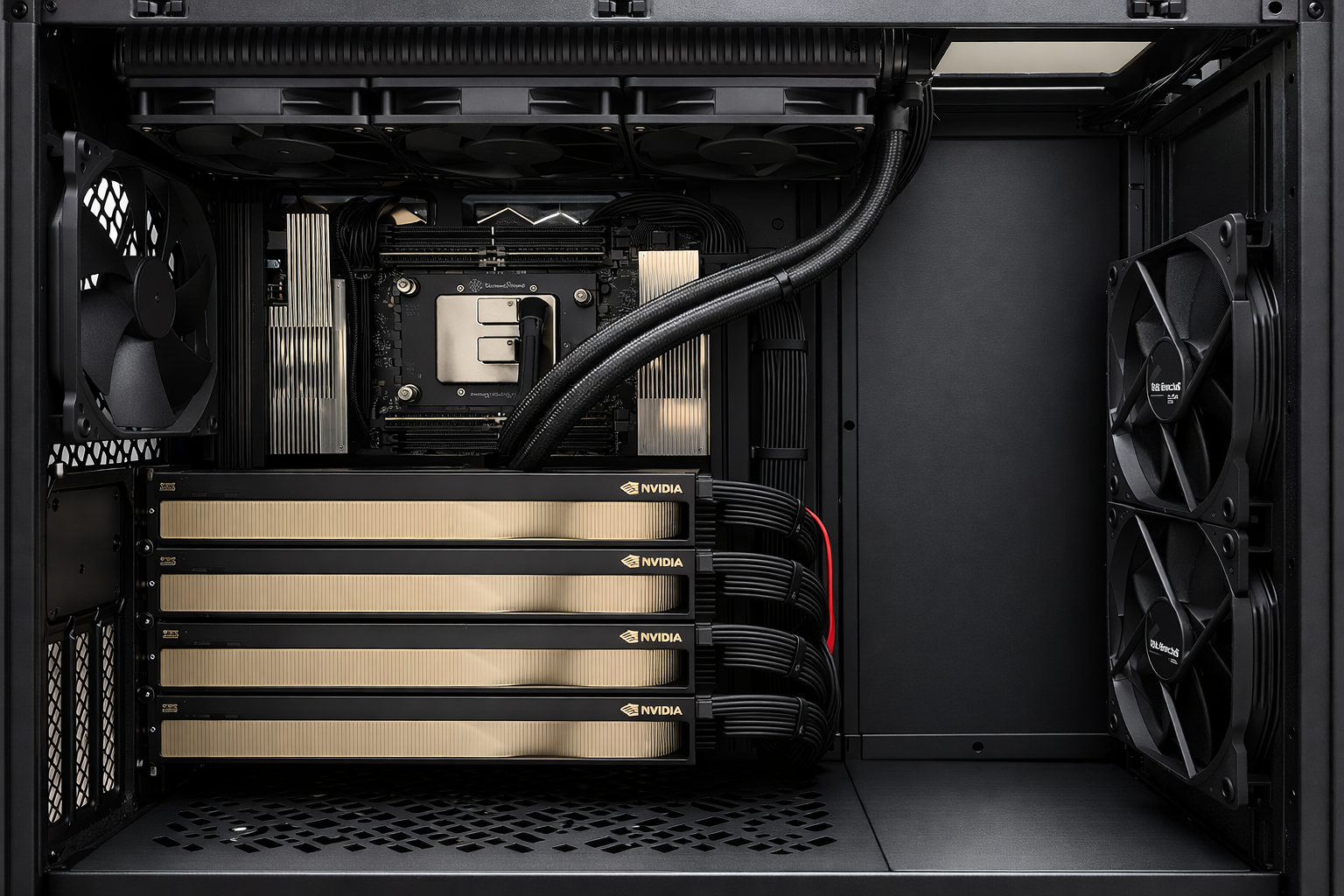

At the system level, the machine is built on an AMD Threadripper PRO high-performance platform, providing a high core count, substantial memory bandwidth, and robust I/O. Large-capacity ECC memory ensures stability for long-running jobs and complex, memory-intensive workloads.

The system is accelerated by several high-end NVIDIA Blackwell GPUs, each offering an unusually large pool of GPU memory. This enables the training and refinement of speech and language models with long temporal contexts, multilingual coverage, and organization-specific adaptations without premature architectural compromises. PCIe Gen 5 connectivity ensures efficient data movement under sustained load.

High-speed NVMe SSDs are used for datasets, model checkpoints, intermediate artifacts, and caches, allowing tight training loops and efficient reuse of prior work. The system runs Ubuntu Linux and integrates with modern AI and ML toolchains, providing a stable and reproducible environment for serious model development.

Virtualization Development Machine: Secure, Scalable Engineering Environments

Complementing direct model training, Nerval operates a dedicated Virtualization Development Machine, based on an AMD Threadripper platform with ECC DDR5 memory, multi-tier SSD storage, and a Proxmox-based virtualization stack.

This system enables Nerval to:

- Create multiple isolated, secure development and test environments

- Safely validate AI services and infrastructure changes before live deployment

- Run virtual machines and containerized services in parallel

- Support infrastructure innovation without disrupting production systems

With dedicated SSD capacity for Proxmox itself, lightweight containers, and data/apps, this virtualization platform allows Nerval's teams to build, simulate, refine, and govern services with discipline and efficiency.

It is an engineering backbone that ensures Nerval can innovate rapidly — without compromising reliability or control.

Production Machine: Multi-GPU Inference for Public and Business AI

The Production Machine is the backbone of Nerval's live AI services. It is designed to support continuous, concurrent inference workloads for both public-facing applications and business-oriented sovereign AI systems.

Built on the same enterprise-grade platform as the development environment, the Production Machine features a higher-core-count AMD Threadripper PRO high-end processor and a large ECC memory footprint to support service orchestration, request handling, monitoring, and logging alongside active inference workloads.

The system is equipped with several high-end NVIDIA Blackwell GPUs connected via PCIe Gen 5. This multi-GPU configuration enables parallel execution of multiple AI services — including speech recognition, translation, voice synthesis, and organization-specific assistants — while maintaining low latency and predictable performance.

Dedicated NVMe storage supports fast model loading, caching, and operational telemetry. The production environment runs a hardened Ubuntu Linux stack tuned for stability, uptime, and controlled evolution as services scale.

Compact AI Supercomputing: Desktop-Scale Breakthrough Power

Completing the infrastructure stack, Nerval is adding a compact, next-generation desktop AI supercomputer, based on NVIDIA's DGX Spark concept — a device designed to deliver extraordinary AI capability in a remarkably small footprint.

This system is engineered to:

- Run very large modern AI models locally

- Support rapid prototyping and advanced experimentation with frontier-scale systems

- Enable powerful inference without dependency on the cloud

- Further future-proof Nerval's AI development pipeline

It represents the convergence of accessibility and power: supercomputer-class AI performance directly within Nerval's physical workspace, strengthening infrastructure sovereignty and accelerating innovation cycles.

Infrastructure as Strategy — and the Next Phase

For Nerval, infrastructure is not an afterthought — it is a strategic layer.

We have made a deliberate transition from depending primarily on rented cloud compute and externally hosted inference to building, owning, and operating our AI training, virtualization, and deployment environments in-house. This reflects the maturity of the work already completed and gives Nerval tighter control over performance, cost structures, data governance, and deployment timelines — all of which are essential for sovereign AI serving businesses, institutions, and communities.

Owning our compute stack positions Nerval for its next phase of growth. As we move from foundational system development to large-scale deployment, our infrastructure is designed to expand in lockstep with demand — supporting millions, and ultimately tens of millions, of users globally across languages, organizations, industries, and regions.

This investment marks a clear transition: from building the core systems that matter to operating and scaling them confidently. Inclusive, sovereign AI requires serious engineering — and with this infrastructure in place, Nerval is poised to serve businesses and communities worldwide with rigor, reliability, and purpose.

This is only the beginning.